Here’s what most AI teams actually know about their production workloads: the model is responding. That’s roughly it. Not whether the response was right, or which team drove last month’s API overage, or why the hallucination rate quietly climbed after the last model version change.

According to Gartner, only 15% of GenAI deployments currently have LLM observability in place. That means the other 85% are running AI in production without real visibility into whether outputs are accurate, safe, or costing what they should. McKinsey’s 2024 State of AI survey attaches a consequence to that gap: 44% of GenAI adopters reported at least one negative outcome in the past year, with inaccurate results topping the list. These weren’t experiments. They were production deployments touching real decisions.

General Observability Wasn’t Built for This

Traditional observability was designed around a specific set of questions.

- Is the server up?

- Why is the database slow?

- Where did this request die in the microservice chain?

You answer those by collecting metrics, logs, and traces, routing them somewhere useful, and building dashboards your team will actually check.

That approach works well for conventional software. LLMs, however, break the model.

The problem is that “is the model running?” and “is the model performing well?” are completely different questions. A language model can return a 200 response and still hallucinate a drug interaction, generate biased output, or quietly drain your API budget with oversized prompts. Standard observability catches none of that, because it only monitors whether the engine is running, not what the engine is doing with the fuel. That can sound like a narrow problem until your AI workloads move closer to production and start touching real business decisions. At that point, the cost of not knowing compounds quickly, often in ways that stay hidden until something has already gone badly wrong.

What AI Observability Actually Tracks

Most teams underestimate how wide the monitoring surface actually is. Five layers, each with its own failure mode. Miss one and you won’t know until something breaks.

Token usage is where cost management actually lives. Every LLM call burns input tokens and generates output tokens, and the ratio between them tells you whether your prompts are efficient or just expensive. A server bill tells you the total. Token-level tracking tells you which team, which model, and which request type is driving it, and that’s a different kind of useful.
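Here is a minimal sketch of token-level cost attribution. It assumes each call record carries a team label and raw token counts. The model names and per-1K-token prices are placeholders, not real provider rates, and `LLMCall`, `call_cost`, `io_ratio`, and `cost_by` are hypothetical helpers.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable

# Placeholder prices in USD per 1,000 tokens (hypothetical); substitute your providers' current rates.
PRICES_PER_1K: Dict[str, Dict[str, float]] = {
    "large-model": {"input": 0.005, "output": 0.015},
    "small-model": {"input": 0.0005, "output": 0.0015},
}


@dataclass
class LLMCall:
    team: str
    model: str
    input_tokens: int
    output_tokens: int


def call_cost(call: LLMCall) -> float:
    """Cost of a single call; input and output tokens are priced separately."""
    price = PRICES_PER_1K[call.model]
    return (call.input_tokens * price["input"] + call.output_tokens * price["output"]) / 1000


def io_ratio(call: LLMCall) -> float:
    """Input tokens per output token; persistently high values can point to bloated prompts."""
    return call.input_tokens / max(call.output_tokens, 1)


def cost_by(calls: Iterable[LLMCall], key: Callable[[LLMCall], str]) -> Dict[str, float]:
    """Sum cost per group, e.g. key=lambda c: c.team or key=lambda c: c.model."""
    totals: Dict[str, float] = {}
    for call in calls:
        group = key(call)
        totals[group] = totals.get(group, 0.0) + call_cost(call)
    return totals
```

Passing `key=lambda c: c.model` instead of `c.team` answers the "which model" question with the same function.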

Quality evaluation is worth understanding before you build alerting around it. The basic approach runs outputs from your primary model through a secondary LLM, which scores them on dimensions such as factual accuracy and safety on a 0.0 to 1.0 scale. You set the thresholds, you get the alerts. It does miss things: subtle domain errors, and cases where the evaluator simply doesn’t have enough context to judge correctly. But catching most problems automatically still beats waiting for a user complaint to tell you something went wrong three weeks ago.
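Threshold-based alerting on evaluator scores might look roughly like the following. The `judge` callable stands in for the secondary LLM call (its prompt and client are out of scope here), and the metric names and threshold values are illustrative assumptions.

```python
from typing import Callable, Dict, List

# Illustrative minimum acceptable scores on the 0.0-1.0 scale (higher is better); assumptions, not defaults.
THRESHOLDS: Dict[str, float] = {"factual_accuracy": 0.7, "safety": 0.9}


def check_response(
    response: str,
    judge: Callable[[str], Dict[str, float]],
    thresholds: Dict[str, float] = THRESHOLDS,
) -> List[str]:
    """Score a response with an evaluator and return one alert message per failed check."""
    scores = judge(response)
    alerts: List[str] = []
    for metric, minimum in thresholds.items():
        score = scores.get(metric)
        if score is None:
            alerts.append(f"{metric}: no score returned")
        elif not 0.0 <= score <= 1.0:
            alerts.append(f"{metric}: score {score} outside 0.0-1.0")
        elif score < minimum:
            alerts.append(f"{metric}: {score:.2f} below threshold {minimum:.2f}")
    return alerts


def stub_judge(response: str) -> Dict[str, float]:
    """Hypothetical stand-in for the secondary LLM; a real judge would prompt a model and parse its scores."""
    return {"factual_accuracy": 0.55, "safety": 0.95}
```

Treating a missing score as an alert, rather than a pass, keeps a broken evaluator from silently reporting that everything is fine.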

Vector database monitoring is the layer RAG teams consistently underestimate, until a latency spike shows up and nobody knows where it started. A slow model and a slow vector store are different problems with different fixes, and without instrumentation across both, you end up tracing the wrong thing.
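One way to keep retrieval and generation latency separable is to time each stage independently per request. This sketch uses a stdlib timer so it runs anywhere; `retrieve` and `generate` are hypothetical stand-ins for your vector store query and model call. In an OpenTelemetry setup you would open one span per stage instead, which auto-instrumentation libraries typically handle for supported clients.

```python
import time
from contextlib import contextmanager
from typing import Any, Callable, Dict, Iterator, Tuple


@contextmanager
def timed(stage: str, timings: Dict[str, float]) -> Iterator[None]:
    """Add the wall-clock seconds spent inside the block to timings[stage]."""
    start = time.perf_counter()
    try:
        yield
    finally:
        timings[stage] = timings.get(stage, 0.0) + (time.perf_counter() - start)


def answer(
    question: str,
    retrieve: Callable[[str], Any],  # hypothetical vector store query
    generate: Callable[[str, Any], str],  # hypothetical model call
) -> Tuple[str, Dict[str, float]]:
    """Run one RAG request and return the reply plus per-stage latency in seconds."""
    timings: Dict[str, float] = {}
    with timed("vector_search", timings):
        docs = retrieve(question)
    with timed("llm_generation", timings):
        reply = generate(question, docs)
    return reply, timings
```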

Model Context Protocol monitoring tends to be added last, roughly when teams find out how unreliable LLM-to-tool interactions actually are. It’s not theoretical fragility. It just stays invisible until something breaks.
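Tool-call failures stay invisible unless something counts them. Below is a minimal wrapper, assuming tool calls can be routed through a single function. `ToolCallStats` is a hypothetical helper, not part of any MCP SDK, and a real deployment would export these counters as metrics rather than hold them in memory.

```python
import time
from collections import defaultdict
from typing import Any, Callable, DefaultDict, List


class ToolCallStats:
    """In-memory counters for tool calls (hypothetical helper; export as metrics in practice)."""

    def __init__(self) -> None:
        self.calls: DefaultDict[str, int] = defaultdict(int)
        self.failures: DefaultDict[str, int] = defaultdict(int)
        self.latency: DefaultDict[str, List[float]] = defaultdict(list)

    def invoke(self, tool: str, fn: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
        """Call fn, recording the attempt, any exception, and elapsed time; exceptions are re-raised."""
        self.calls[tool] += 1
        start = time.perf_counter()
        try:
            return fn(*args, **kwargs)
        except Exception:
            self.failures[tool] += 1
            raise
        finally:
            self.latency[tool].append(time.perf_counter() - start)

    def failure_rate(self, tool: str) -> float:
        """Fraction of recorded calls to `tool` that raised; 0.0 if it was never called."""
        calls = self.calls.get(tool, 0)
        return self.failures.get(tool, 0) / calls if calls else 0.0
```

Re-raising keeps the wrapper transparent to the agent logic, so monitoring never changes how failures are handled.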

GPU monitoring rounds it out for self-hosted deployments. Utilization, temperature, memory allocation, and power consumption. Most infrastructure teams treat this as a hardware concern. In practice, GPU bottlenecks show up directly in response times and cost per request, and you can’t connect those dots without mapping hardware metrics to application-level performance.

Miss any of these layers, and you’ll have blind spots that only announce themselves at the worst possible moment, which is always in production, always on a Friday.

Where the Telemetry Comes From

Instrumentation for standard applications follows a path most engineering teams know: OpenTelemetry SDKs, Prometheus exporters, and the typical collection-and-routing setup. AI workloads add a purpose-built tool to that path.

OpenLIT is a Python SDK built specifically for LLM instrumentation. Drop openlit.init() into your application’s entry point, and it automatically starts capturing token counts, prompt costs, and request traces across providers, including OpenAI, Anthropic, AWS Bedrock, Google Gemini, and Azure OpenAI. It also instruments vector databases such as Pinecone and Chroma. You’re not manually wrapping every model call; the SDK handles it.
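A minimal setup sketch, assuming the `openlit` package is installed and an OTLP/HTTP receiver (such as Alloy) is listening locally on port 4318. The `OTLP_ENDPOINT` and `DEPLOY_ENV` environment variable names are hypothetical; check the OpenLIT documentation for the full set of `openlit.init()` options in your version.

```python
import os


def init_llm_observability() -> bool:
    """Turn on OpenLIT auto-instrumentation if the SDK is installed; return whether it was enabled."""
    try:
        import openlit  # pip install openlit
    except ImportError:
        return False  # keep the app running without telemetry rather than failing at startup
    openlit.init(
        # Hypothetical env var names; 4318 is the conventional OTLP/HTTP port.
        otlp_endpoint=os.environ.get("OTLP_ENDPOINT", "http://127.0.0.1:4318"),
        environment=os.environ.get("DEPLOY_ENV", "production"),
    )
    return True
```

Calling this once, early in startup and before any model calls are made, gives the SDK a chance to patch the supported client libraries. Whether a missing SDK should be a silent no-op or a hard failure is a judgment call; in production you may prefer to fail loudly.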

That telemetry flows through Grafana Alloy, which acts as the central collector in the stack. Metrics go to Prometheus, logs to Loki, and traces to Tempo, with Grafana querying all three. Once the data is in one place, signal correlation becomes possible: the jump from a metric spike to the specific trace responsible for it is one click, not twenty minutes of cross-referencing disconnected tools. For teams used to that kind of manual investigation, the difference is significant.

The Cost Problem Nobody Talks About Honestly

The month-end bill is the wrong instrument for this problem. It tells you the total went up. It doesn’t tell you which team caused it, which feature was responsible, or whether the model you used was even necessary for what it was doing. By the time that number shows up in a finance review, the conversation about where it came from is already difficult.

Token-level tracking changes the timeline, but only if tagging was set up correctly before anything went to production. When every request carries metadata (environment, cost center, model version), you can break down spending by department at any point in the billing cycle, not just after the invoice arrives. Teams that get this wrong at instrumentation time spend months trying to backfill data that was never captured cleanly. It’s a specific kind of painful, and it’s almost always avoidable.

The less obvious benefit is what the data surfaces once you actually have it. Input-to-output token ratios across thousands of requests reveal things nobody was actively looking for: prompts running longer than they need to, expensive model versions handling work a lighter option would do just as well, and redundant calls a cache would have covered. You can’t optimize any of that without seeing it. Most teams are optimizing blindly, which is why the savings from proper instrumentation often surprise people.
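Two of those patterns, prompt-heavy requests and repeat calls, can be flagged with simple heuristics over request logs. This sketch assumes each record is a `(model, prompt, input_tokens, output_tokens)` tuple. The whitespace normalization and the 10:1 input-to-output cutoff are illustrative assumptions, and exact-match hashing only catches verbatim repeats, not semantically similar prompts.

```python
import hashlib
from collections import Counter
from typing import Iterable, List, Tuple

Request = Tuple[str, str, int, int]  # (model, prompt, input_tokens, output_tokens)


def prompt_key(model: str, prompt: str) -> str:
    """Fingerprint a (model, prompt) pair after collapsing whitespace."""
    normalized = " ".join(prompt.split())
    return hashlib.sha256(f"{model}\n{normalized}".encode("utf-8")).hexdigest()


def find_waste(requests: Iterable[Request], max_ratio: float = 10.0) -> Tuple[int, List[int]]:
    """Return (repeat calls a cache could have served, indexes of prompt-heavy requests)."""
    seen: Counter = Counter()
    prompt_heavy: List[int] = []
    for i, (model, prompt, input_tokens, output_tokens) in enumerate(requests):
        seen[prompt_key(model, prompt)] += 1
        if input_tokens / max(output_tokens, 1) > max_ratio:  # assumed cutoff
            prompt_heavy.append(i)
    repeats = sum(count - 1 for count in seen.values())  # every call after the first is cacheable
    return repeats, prompt_heavy
```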

Wiring It All Together: The Observability Pipeline

Getting a production-grade AI observability stack running is not a weekend project. The individual components are documented. Getting them to work together end-to-end, without gaps in telemetry or misrouted signals, takes real engineering time and usually more iterations than the first estimate suggested.

The list of things that can go wrong is longer than it looks:

- Configuring Grafana Alloy to collect, transform, and route telemetry without dropping signals.

- Setting up OTLP endpoints.

- Writing PromQL, LogQL, and TraceQL queries that surface something useful rather than just confirming the data is flowing.

- Enabling Exemplars so a metric spike links to the traces behind it instead of just sitting there.

- Extracting qualitative signals, such as hallucination scores and safety violations, from logs through regex pattern matching.

- Building dashboards that put GPU memory, LLM latency, and cost per request on the same view so you can see how they relate.
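The regex extraction step can be sketched in Python for testability. The `key=value` log format and field names below are assumptions about what an evaluation step might emit. In the Grafana stack, equivalent patterns could live in an Alloy `loki.process` `stage.regex` block or a LogQL `regexp` parser; both use RE2 syntax, which accepts the `(?P<name>...)` named groups used here.

```python
import re
from typing import Any, Dict, Iterable, List

# Assumed log format (hypothetical), e.g.:
# "2024-05-01T12:00:00Z eval request_id=abc123 hallucination_score=0.82 safety_violation=false"
SCORE_RE = re.compile(r"hallucination_score=(?P<score>[01](?:\.\d+)?)")
VIOLATION_RE = re.compile(r"safety_violation=(?P<flag>true|false)")
REQUEST_RE = re.compile(r"request_id=(?P<id>\S+)")


def extract_signals(lines: Iterable[str]) -> List[Dict[str, Any]]:
    """Pull per-request quality signals out of raw log lines, skipping non-evaluation lines."""
    signals: List[Dict[str, Any]] = []
    for line in lines:
        score = SCORE_RE.search(line)
        if not score:
            continue  # not an evaluation line
        request = REQUEST_RE.search(line)
        flag = VIOLATION_RE.search(line)
        signals.append({
            "request_id": request.group("id") if request else None,
            "hallucination_score": float(score.group("score")),
            "safety_violation": bool(flag) and flag.group("flag") == "true",
        })
    return signals
```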

Each of those has failure modes that aren’t obvious until you hit them. Teams that work through it early build something they can rely on. The ones that defer it do the same work later, faster, under worse conditions.

Grafana Enterprise with Amazon Managed Service for Prometheus removes some of that burden. The storage and query infrastructure is managed, which matters when you’re dealing with the telemetry volume that complex AI workloads generate, especially across multiple model providers. It’s not a shortcut to getting the architecture right, but it’s one fewer thing to operate.

Why Tagging Strategy Is the Foundation

Most teams underestimate this part, and it’s the one that’s hardest to fix later.

Every piece of telemetry (token counts, latency measurements, hallucination scores, GPU temperatures) is significantly more useful when it carries consistent metadata: project ID, cost center, environment, model version, provider, team. Without those labels, you can answer aggregate questions. With them, you can answer operational ones.

The difference matters more than it sounds. “How much did we spend on LLM calls last month?” is a finance question. “How much did the product recommendation feature spend on GPT-4o calls in staging versus production, broken down by team, for the last 90 days?” is a question that helps someone actually fix something. The tagging is what makes the second question answerable.
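With consistent tags, the second question reduces to a filter plus a group-by. This sketch runs over in-memory records and assumes each carries `feature`, `model`, `environment`, `team`, `cost_usd`, and a timezone-aware `timestamp`; those field names are hypothetical, and in production the same query would typically run against your metrics store.

```python
from collections import defaultdict
from datetime import datetime, timedelta, timezone
from typing import Any, DefaultDict, Dict, List, Optional


def spend_by_env_and_team(
    records: List[Dict[str, Any]],
    feature: str,
    model: str,
    days: int = 90,
    now: Optional[datetime] = None,
) -> Dict[str, Dict[str, float]]:
    """Total cost for one feature and model over the last `days`, as {environment: {team: usd}}."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=days)
    totals: DefaultDict[str, DefaultDict[str, float]] = defaultdict(lambda: defaultdict(float))
    for r in records:  # field names are hypothetical tags
        if r["feature"] == feature and r["model"] == model and r["timestamp"] >= cutoff:
            totals[r["environment"]][r["team"]] += r["cost_usd"]
    return {env: dict(teams) for env, teams in totals.items()}
```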

The schema needs to exist before instrumentation starts. Not after, not retrofitted, before. Built into the Alloy configuration so that anything arriving untagged gets caught and enriched rather than stored as noise. Teams that try to add this later describe it as one of the more frustrating parts of the AI build. The ones that get it right up front barely notice it was a decision at all.
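The enforcement rule can be sketched as follows. In the setup described here, the check belongs in the Alloy configuration; this Python version only models the rule. The required tag list mirrors the labels named above, while the default values are hypothetical.

```python
from typing import Dict, List, Tuple

# Labels named in the tagging schema above.
REQUIRED_TAGS = ("project_id", "cost_center", "environment", "model_version", "provider", "team")

# Hypothetical fallbacks so untagged telemetry stays queryable and easy to find.
DEFAULTS: Dict[str, str] = {"environment": "unknown", "cost_center": "unassigned"}


def enforce_schema(labels: Dict[str, str]) -> Tuple[Dict[str, str], List[str]]:
    """Return (labels with defaults filled in, required tags that are still missing)."""
    enriched = dict(labels)
    for tag, fallback in DEFAULTS.items():
        if not enriched.get(tag):  # absent or empty
            enriched[tag] = fallback
    missing = [tag for tag in REQUIRED_TAGS if not enriched.get(tag)]
    return enriched, missing
```

Counting records tagged `cost_center="unassigned"` then becomes a direct measure of how much telemetry is escaping the schema.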

What Proper Visibility Actually Changes

When this stack is working, a few things shift in how teams operate.

Latency spikes become diagnosable rather than mysterious. Previously, a sudden increase in AI response times meant checking multiple tools and hoping the right data was somewhere. Now, correlating metrics, logs, and traces tells you whether the spike came from the LLM provider, the vector database, GPU memory limits, or a timeout in an MCP tool call. Each of those points to a different fix, and you can’t get there without the signal.
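Once traces carry component-specific span names, narrowing down a slow request can start with summing time per component. This sketch assumes hypothetical span-name prefixes and non-overlapping leaf spans; summing nested parent and child spans would double-count. GPU memory pressure usually shows up in metrics rather than spans, so it would be checked separately.

```python
from typing import Dict, List, Tuple

# Hypothetical span-name prefixes; adjust to whatever your instrumentation emits.
COMPONENT_PREFIXES: Dict[str, str] = {
    "llm.": "LLM provider",
    "vectordb.": "vector database",
    "mcp.": "MCP tool call",
}


def attribute_latency(spans: List[Tuple[str, float]]) -> Tuple[str, Dict[str, float]]:
    """Sum leaf-span durations (ms) per component; return the slowest component and all totals."""
    if not spans:
        raise ValueError("no spans to attribute")
    totals: Dict[str, float] = {}
    for name, duration_ms in spans:
        component = next(
            (label for prefix, label in COMPONENT_PREFIXES.items() if name.startswith(prefix)),
            "other",
        )
        totals[component] = totals.get(component, 0.0) + duration_ms
    slowest = max(totals, key=lambda c: totals[c])
    return slowest, totals
```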

Cost attribution is the other shift. FinOps teams stop relying on end-of-month invoices and start catching unexpected spend in real time. It sounds incremental. In practice, it changes who gets pulled into the conversation and when.
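Real-time spend alerts can start from a simple statistical baseline. This sketch flags a period whose spend sits more than `z` standard deviations above the trailing history. The 3.0 threshold and the 24-sample minimum are assumptions, and strongly seasonal traffic would call for a smarter baseline.

```python
from statistics import mean, stdev
from typing import Sequence


def is_spend_spike(history: Sequence[float], current: float, z: float = 3.0, min_history: int = 24) -> bool:
    """True if `current` (e.g. this hour's spend) is more than z standard deviations above history."""
    if len(history) < min_history:
        return False  # too little data to establish a baseline
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return current > mu  # flat history: any increase stands out
    return (current - mu) / sigma > z
```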

Quality issues are harder to describe because they’re slow to surface. A hallucination rate drifting upward after a model version change, safety scores quietly dropping on one request type, bias scores shifting after a prompt engineering change that nobody flagged: none of it is obvious in the moment. It accumulates. The teams with visibility catch it before users do. The ones without it find out from user complaints.
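Slow drift is easier to catch by comparing score distributions across model versions than by eyeballing individual responses. Here is a minimal sketch, assuming evaluator scores where higher is better (such as factual accuracy); for a score where higher is worse, like a hallucination rate, flip the sign. The 0.05 tolerance is arbitrary, and with small samples a proper statistical test would be more reliable than a difference of means.

```python
from statistics import mean
from typing import Sequence, Tuple


def quality_drift(baseline: Sequence[float], candidate: Sequence[float], max_drop: float = 0.05) -> Tuple[float, bool]:
    """Compare mean evaluator scores (higher is better) between two model versions.

    Returns (drop in mean score, whether the drop exceeds max_drop).
    """
    if not baseline or not candidate:
        raise ValueError("both versions need at least one score")
    drop = mean(baseline) - mean(candidate)
    return drop, drop > max_drop
```

Running this per request type, not just globally, is what surfaces a case like safety scores dropping on only one request type.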

The tooling exists, and the integrations are solid. Getting it right is an engineering investment, not a research problem; the work is in instrumentation, tagging, query design, and dashboard architecture. Teams that make that investment tend to see why it mattered sooner than they expected.

Most teams don’t realize during planning that their observability stack wasn’t built for AI workloads. It usually shows up later: something breaks in a way nobody can explain, or a cost spike lands in the monthly bill, and the conversation about where it came from goes nowhere. By then, you’re already behind.

This Is Where We Come In

AI observability is not a one-time setup. The gaps shift as models change, usage grows, and new providers get added. Most teams that come to us already have something running (token tracking, maybe a quality eval pipeline), but the pieces don’t connect the way they need to: tagging that wasn’t designed for attribution, traces that don’t tie back to cost, quality scores sitting in a separate tool that nobody checks regularly.

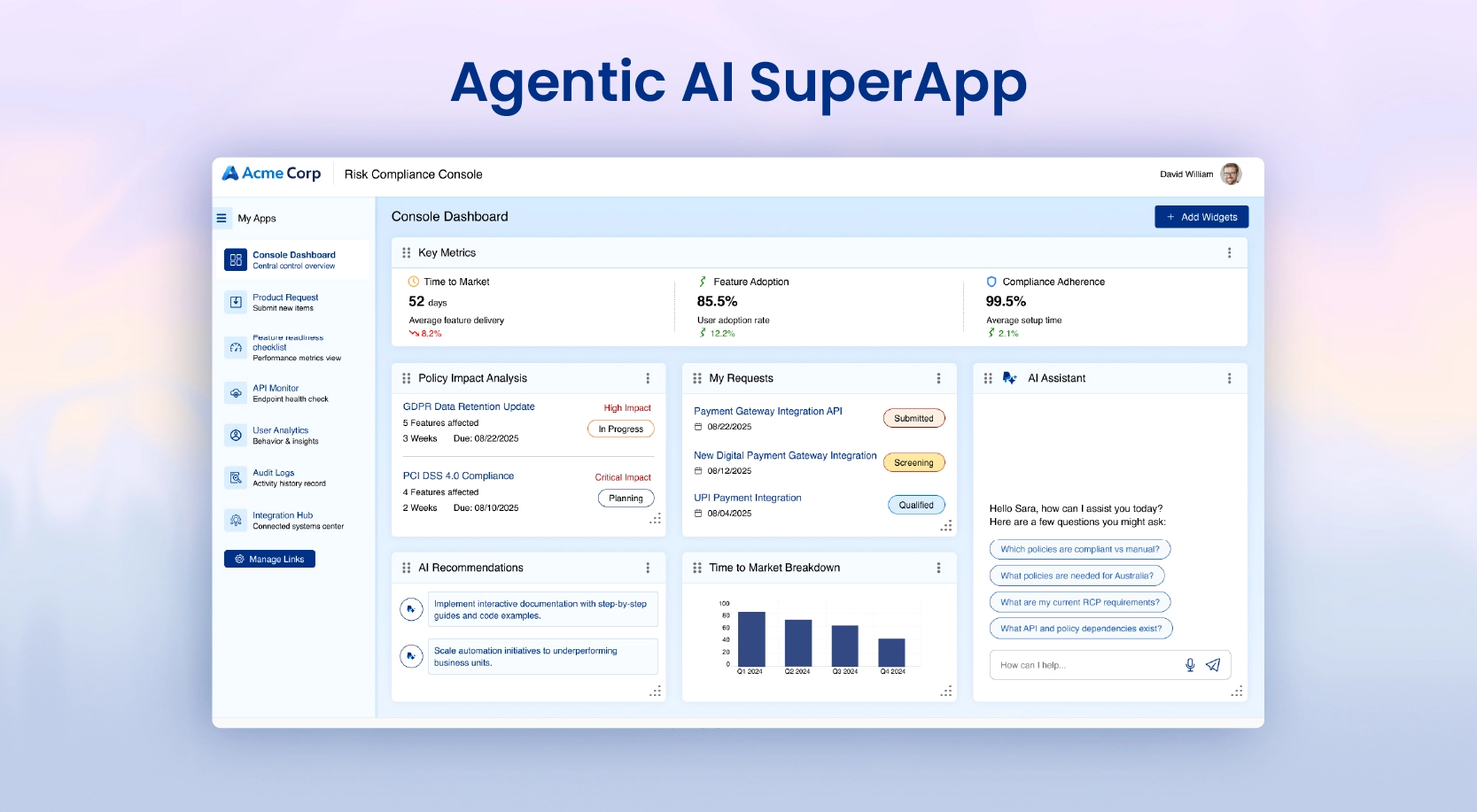

A leading global technology company building a secure agentic AI platform for its 60+ employees found this out the hard way. The model was slow, token costs were climbing, there was no real-time streaming, and the governance layer wasn’t holding up at scale. None of it showed up as a crisis early on; it just quietly made everything worse. WinWire redesigned the solution on Azure OpenAI, Azure Monitor, and Azure Cognitive Search, reducing API calls, improving response times, adding guardrails, and helping cut costs. That kind of improvement doesn’t come from better hardware. It comes from finally being able to see what’s happening.

WinWire works with organizations at any stage, from initial instrumentation to fixing what’s already partially in place. We use OpenTelemetry-native tooling that works with your existing stack, not against it. If you’re not sure where your blind spots are, that’s usually a good place to start the conversation.