Leadership teams typically have plenty of AI ideas; their main challenge is turning those ideas into results. The numbers from 2025 tell an uncomfortable story. S&P Global found that 42% of companies abandoned most of their AI initiatives last year, more than double the 17% that did so the year before. The average organization quietly scrapped nearly half of its proofs of concept (POCs) before they ever reached production. BCG’s research lands even harder: 60% of companies generated no material value from AI despite real, sustained investment. Only 5% created value at scale.

So, the gap isn’t between companies that are trying and companies that aren’t. Almost everyone is trying. The gap is between organizations that keep launching things and organizations that actually finish them. The reasons projects stall are familiar: data turns out messier than expected, governance issues surface, costs are underestimated, and priorities shift.

It usually begins with enthusiasm: a pilot is launched and quickly inspires similar projects across departments. Soon, several initiatives are running at once, each promising big outcomes by Q3. Yet when Q3 arrives, only a few deliver. Most slip quietly, creating a backlog. Then comes the tougher question: which projects deserve continued investment?

Moving a project to production isn’t simply scaling up a demo. Clean data, robust governance, and a solid cost model are essential. Many pilots never get this far; they’re praised for potential, shelved when priorities change, and replaced by fresh ideas. The organization stays busy but doesn’t make meaningful progress. The pattern is consistent enough that it has a name now: pilot purgatory, where projects are too promising to kill but too fragile to ship. The Prioritization & ROI Scoring Mechanism (PRISM) exists to break that loop by forcing the hard questions before a single line of code gets written.

Prioritization & ROI Scoring Mechanism (PRISM) – Agentic AI Prioritization Framework

PRISM is part of WinWire’s Agentic AI @ Scale Playbook. It offers leadership a structured process to evaluate and rank AI use cases before any development starts, highlighting which projects drive business impact versus those that merely consume resources.

Why Selection Often Fails

Agentic AI initiates actions and workflows in real systems. Early mistakes do more than waste time; they can lock in flawed processes, create unexpected data risks, and erode trust in the program. Rebuilding credibility takes longer than building the system.

Despite these risks, most use case selection remains informal. Teams often choose based on what’s easiest to demo, where clean data happens to be available, or which stakeholder pushes hardest. These criteria rarely withstand budget scrutiny, leaving pilots unprepared when tough questions arise. Work pauses, new candidates emerge, and the core issue persists: the lack of a dependable method for deciding what should be built.

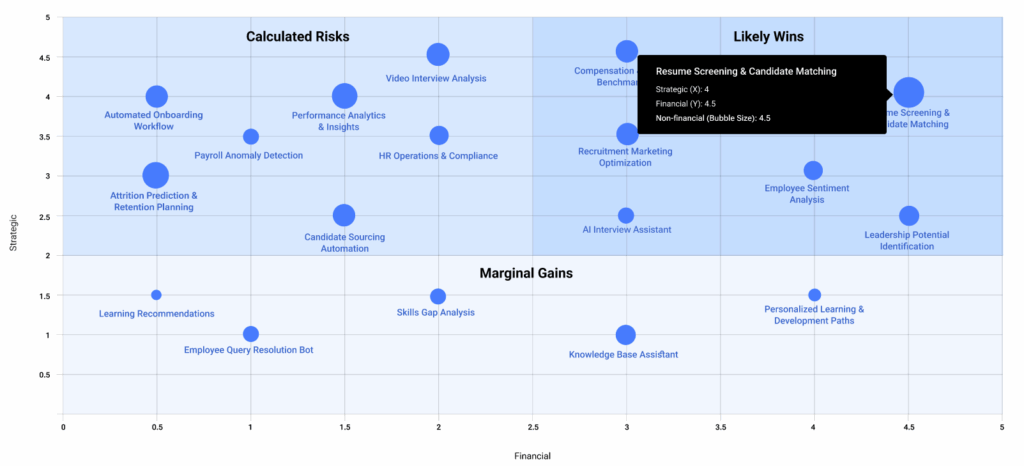

How PRISM Identifies High-Impact Agentic AI Opportunities Across the Enterprise

PRISM evaluates every candidate use case across three critical areas:

- Leadership relevance: Projects must align with metrics that senior leaders are accountable for, ensuring they solve real problems.

- Scalability: Use cases should extend beyond individual teams, benefiting the wider organization.

- Cost and trust: Considerations like controls, governance, auditability, and infrastructure expenses determine production readiness.

Mini scorecard example: A “Customer support ticket triage agent” scored high on leadership relevance and scalability, but scored low on trust and cost because it needed access to sensitive customer data and had no clear audit trail. It stayed in the backlog until role-based access, logging, and a cost ceiling were defined, at which point it moved into the MVP phase.
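The scorecard logic above can be sketched in a few lines of Python. This is only an illustration, not WinWire’s actual scoring model: the 1-to-5 scale, the `WEIGHTS` values, the `GATE` threshold, and the `UseCase` class are all assumptions made for the example. It shows the idea from the ticket triage case, where a strong overall score does not move a use case forward while any one area remains below the bar.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical weights for the three PRISM areas (assumed, not from PRISM itself).
WEIGHTS = {
    "leadership_relevance": 0.4,
    "scalability": 0.3,
    "cost_and_trust": 0.3,
}

# Hypothetical minimum score (on a 1-5 scale) every area must reach
# before a use case can leave the backlog.
GATE = 3


@dataclass
class UseCase:
    name: str
    leadership_relevance: int  # 1 (weak) to 5 (strong)
    scalability: int
    cost_and_trust: int

    def weighted_score(self) -> float:
        """Weighted average across the three areas, rounded to 2 decimals."""
        total = sum(getattr(self, area) * w for area, w in WEIGHTS.items())
        return round(total, 2)

    def blockers(self) -> List[str]:
        """Areas scoring below the gate, regardless of the overall score."""
        return [area for area in WEIGHTS if getattr(self, area) < GATE]

    def decision(self) -> str:
        """'mvp' only when no area is blocked; otherwise 'backlog'."""
        return "backlog" if self.blockers() else "mvp"


# Illustrative scores for the triage agent before controls were defined.
triage = UseCase("Customer support ticket triage agent", 5, 4, 2)
print(triage.weighted_score(), triage.blockers(), triage.decision())

# Same use case after role-based access, logging, and a cost ceiling exist.
triage_ready = UseCase("Customer support ticket triage agent", 5, 4, 4)
print(triage_ready.weighted_score(), triage_ready.decision())
```

Gating each area separately, instead of ranking on the weighted total alone, keeps a high-relevance project from reaching the MVP phase while its trust and cost questions are still open.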

By focusing on these aspects, conversations shift from debating interesting ideas to defending projects that can withstand external scrutiny.

Results Delivered

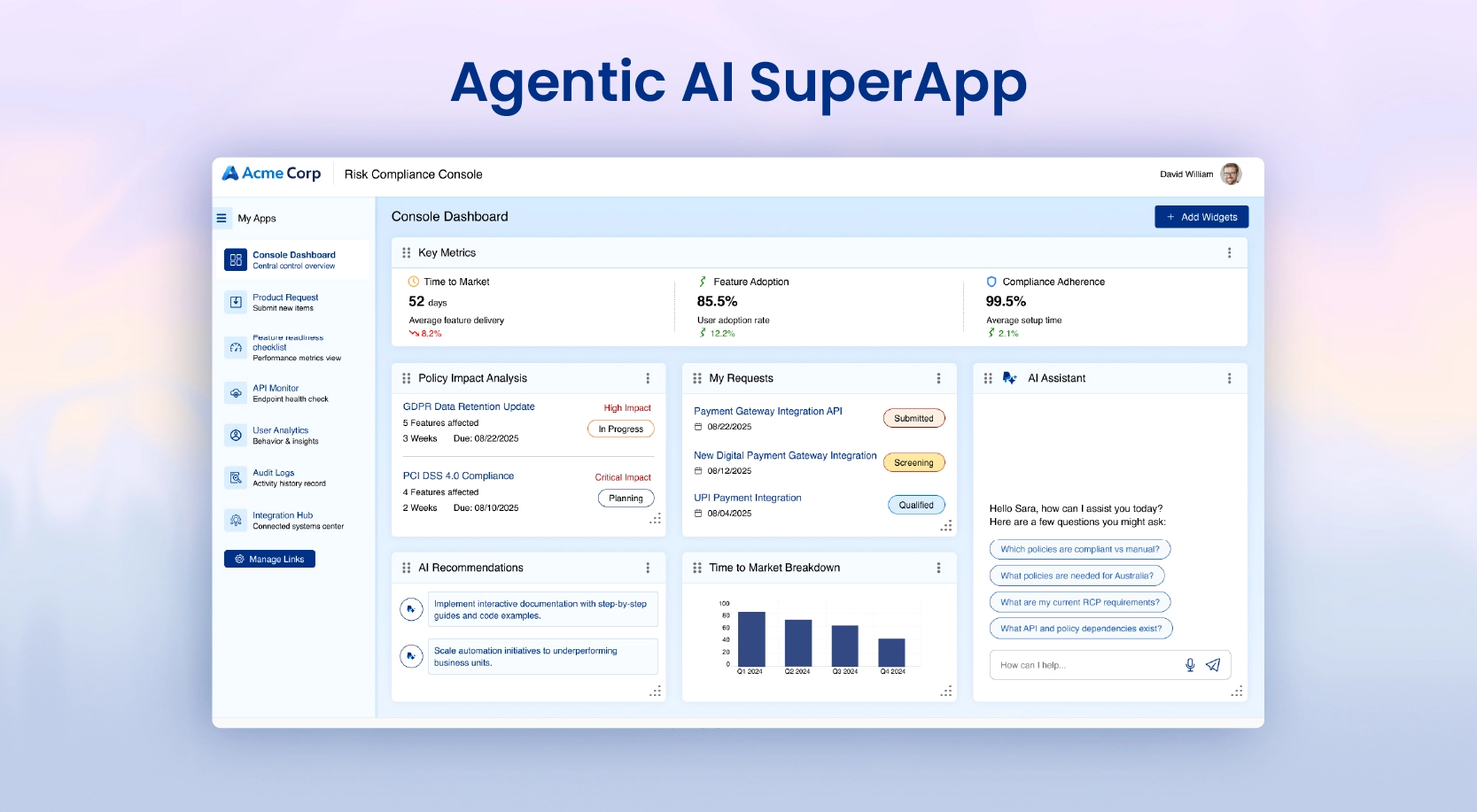

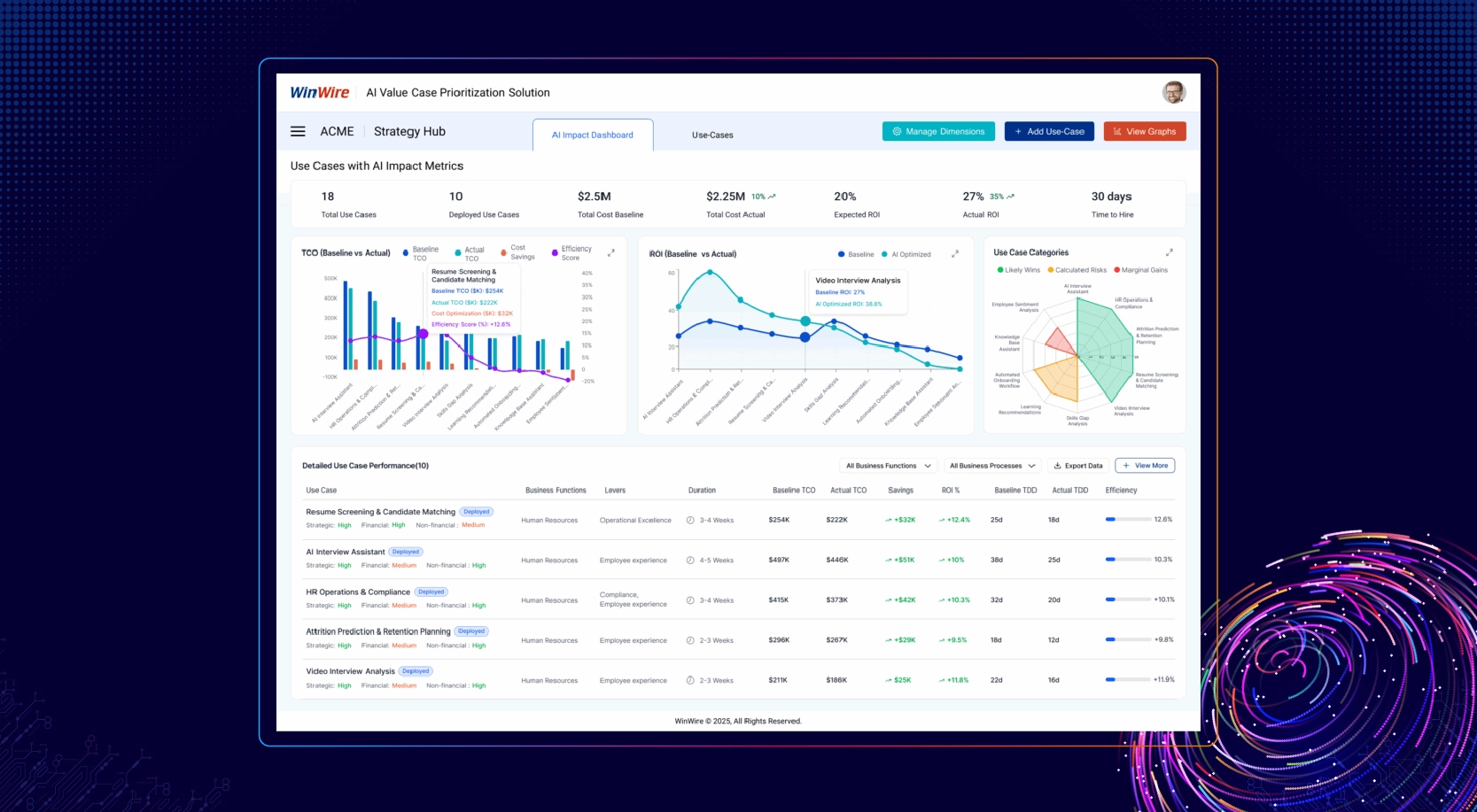

PRISM generates actionable tools for teams, including an AI Impact Dashboard for post-launch visibility, a Scorecard comparing use cases, a Prioritization Heatmap highlighting trade-offs, Top 10 Value Cases mapped to KPIs, a Consumption Plan with realistic cost and usage figures, a Quick MVP Plan for rapid build execution, and integrated monitoring for ongoing measurement.

How PRISM Scores and Ranks Agentic AI Use Cases for Maximum ROI

PRISM sits inside WinWire’s 3i framework, and each phase has a real job to do. Imagine is where the long list gets cut down honestly, not based on enthusiasm but on what the business actually needs to move. Ignite takes what survived that cut and turns it into something you can show, quickly, with a scope tight enough that the team doesn’t burn out proving it. Impact is where the program stops being a set of experiments and starts compounding, each win making the next one faster to land.

What makes this repeatable in practice is the tooling the Playbook puts around it. AgentVerse gives teams 100+ pre-built WinAgents to work from rather than build from zero. The WinAI Factory Model means what worked in one business unit doesn’t have to be reinvented in the next. Governance, Change Management, KnowledgeBase, AgenticSDLC, and AgenticOps keep the controls and the technical foundation solid as the program grows into new territory.

From Pilot to Scale: Customer Stories Powered by PRISM

- Global Technology Company (62,000 Employees): PRISM helped the team lock in the non-negotiables for production early, especially access separation, audit trails, and guardrails. The Azure-based platform launched with department-level controls and real-time responses, and API costs fell threefold, making it viable for a workforce-wide rollout.

- Healthcare Provider (200+ Locations): PRISM made the call upfront: without a unified data foundation, AI would stall. After migrating to Databricks and standardizing analytics across the network, decision-support tools moved forward with confidence, and IT costs dropped by 70%.

Getting Started

Begin by clarifying your AI vision, identifying use cases, and engaging stakeholders responsible for relevant outcomes. Apply PRISM to your backlog and focus on a select few projects with strong prospects. Choosing two or three well-vetted projects yields better results than pursuing ten uncertain ones. Each win builds credibility, paving the way for further investment and setting a standard others will follow.

PRISM doesn’t guarantee returns, but it gives decision-makers something vital: a shared method for approving or rejecting projects, with direct ties between development and intended outcomes. Most organizations have no shortage of ideas. What’s missing is the connection that turns those ideas into results. PRISM provides that link.

Want to see how PRISM applies to your AI backlog? Talk to WinWire’s team and get a prioritization assessment built around your specific use cases and business objectives.